In December 2020, Australian applied mathematician Sam Blake, American software developer David Oranchak and Belgian programmer Jarl Van Eycke announced that they had broken the Z-340, a 340-character cipher mailed by the Zodiac Killer to the San Francisco Chronicle in November 1969 that had gone unsolved for fifty-one years. The team’s approach combined purpose-built solving software with computational searches across millions of possible reading paths. The FBI confirmed the solution the same month. The decryption did not solve the underlying murders; the message itself contained no identifying information. But it did demonstrate how far the playing field for historical cipher work has shifted in the past decade.

This piece looks at where machine learning and computational cryptanalysis have made measurable progress on historical ciphers, where progress has been more limited than press coverage suggested, and what the current state of the most famous unsolved manuscripts looks like in 2026. The aim is realistic assessment, not techno-optimism.

The Z-340 breakthrough

The Z-340 had been the subject of attempted solutions since the day it arrived. The simpler Z-408 cipher, sent by the same correspondent in July 1969, had been broken within a week by amateur codebreakers Donald and Bettye Harden using classical frequency analysis. The Z-340 resisted the same approach because its plaintext had been transposed as well as substituted, so reading the symbols in their written order never yields language-like statistics.

The 2020 solution rested on two advances. First, the team used purpose-built software, notably Van Eycke’s homophonic-substitution solver AZdecrypt, to test very large numbers of candidate diagonal reading paths efficiently. Second, they established that the cipher combined a transposition step with homophonic substitution, meaning that the letters of the cleartext had been rearranged as well as replaced by symbols. The decrypted message, which begins “I HOPE YOU ARE HAVING LOTS OF FUN IN TRYING TO CATCH ME”, does not identify the killer.
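How the two layers interact can be shown with a toy model. The sketch below is not the team’s software: the grid size, the step of one row down and two columns right with wrap-around, and the integer symbol scheme are all illustrative assumptions. Plaintext letters are placed along a diagonal path, each letter is replaced by one of several interchangeable symbols, and decryption only works when the grid is read back along the same path.

```python
def diagonal_path(rows, cols, down=1, right=2):
    """Cells visited by repeatedly stepping `down` rows and `right` columns, wrapping at the edges."""
    path, seen = [], set()
    r = c = 0
    for _ in range(rows * cols):
        if (r, c) in seen:
            raise ValueError("this step does not cover every cell of the grid")
        seen.add((r, c))
        path.append((r, c))
        r, c = (r + down) % rows, (c + right) % cols
    return path


def make_key(alphabet, n_homophones=2):
    """Hypothetical homophonic key: every letter owns `n_homophones` integer symbols."""
    enc = {ch: [i * n_homophones + j for j in range(n_homophones)]
           for i, ch in enumerate(alphabet)}
    dec = {sym: ch for ch, syms in enc.items() for sym in syms}
    return enc, dec


def encrypt(plaintext, rows, cols, enc):
    """Place substituted symbols along the diagonal path (transposition plus substitution)."""
    if len(plaintext) != rows * cols:
        raise ValueError("plaintext must fill the grid exactly")
    grid = [[None] * cols for _ in range(rows)]
    used = {ch: 0 for ch in enc}
    for ch, (r, c) in zip(plaintext, diagonal_path(rows, cols)):
        symbols = enc[ch]
        grid[r][c] = symbols[used[ch] % len(symbols)]  # rotate through the homophones
        used[ch] += 1
    return grid


def decrypt(grid, dec):
    """Read the grid along the same path and map each symbol back to its letter."""
    rows, cols = len(grid), len(grid[0])
    return "".join(dec[grid[r][c]] for r, c in diagonal_path(rows, cols))


def read_rows(grid, dec):
    """Naive left-to-right reading, which ignores the transposition."""
    return "".join(dec[sym] for row in grid for sym in row)
```

With the correct substitution key, `read_rows` still returns scrambled letters, which is why a solver that assumes the written order never sees language-like text and why the reading path has to be part of the search.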

What the case demonstrated was that the modern combination of computational power, sophisticated algorithm design and amateur perseverance can solve cipher problems that resisted decades of work. The technique was not “AI” in the modern large-language-model sense; it was specialised cryptanalytic software run for substantial periods of time on commodity hardware. The distinction matters for understanding what is achievable.

The Copiale Cipher

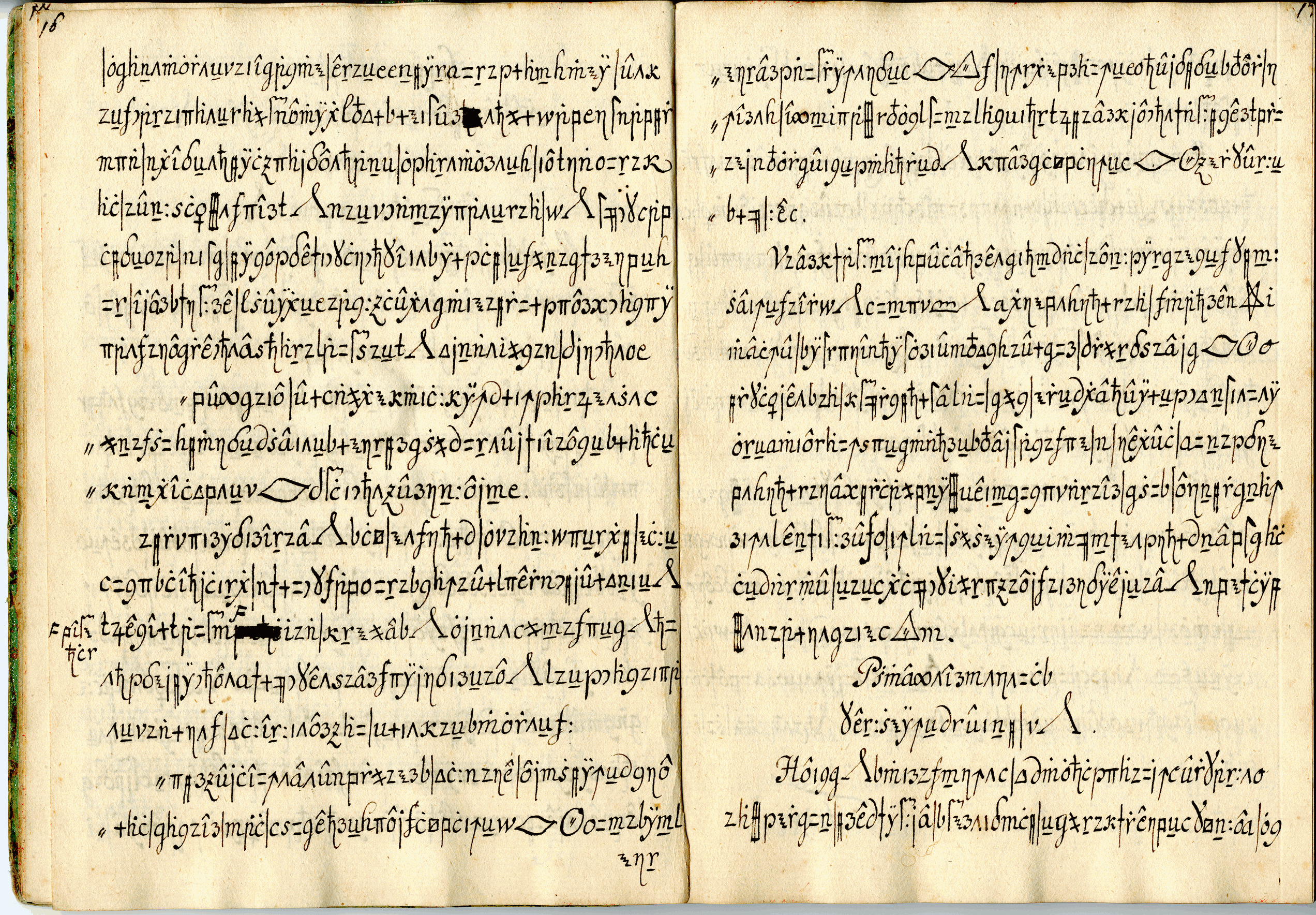

The Copiale cipher, a 75,000-character document discovered in an East Berlin archive after the fall of the Berlin Wall, was decrypted in 2011 by Kevin Knight of the University of Southern California together with Beáta Megyesi and Christiane Schaefer of Uppsala University. The cipher, written in a mixture of Latin letters, Greek letters and abstract symbols, turned out to encode a German-language ritual document of an eighteenth-century secret society known as the High Enlightened Oculist Order.

The decryption combined statistical analysis (suggesting the underlying language was likely German), targeted hypothesis testing about which symbols mapped to which letters, and substantial linguistic and historical research about the likely context. The work pioneered some of the techniques later used in the Zodiac Z-340 effort. Knight’s team continued to work on similar manuscripts after the Copiale success, including substantial progress on the Borg cipher, an Italian-language ecclesiastical text from the seventeenth century.

What machine learning has actually contributed

The role of machine learning in these breakthroughs is more nuanced than press coverage usually conveys. Modern cryptanalytic software uses statistical and search-based methods that are not, in the strict sense, machine learning. They are specialised algorithms applied to constrained problems. True machine learning approaches, particularly large neural language models, have contributed mainly in three supporting areas.

The first is language identification: given a sequence of cleartext fragments, modern language models can identify the source language more reliably than older n-gram methods, which helps narrow hypotheses about a partially-broken cipher. The second is plausibility scoring: when a candidate decryption is generated, neural models can assign probability scores that distinguish coherent text from random noise more sensitively than traditional letter-frequency tests. The third is generation of candidate plaintexts that match constraints from the cipher, which has applications in iterative decryption.

What machine learning has not done, despite repeated claims, is independently solve any major historical cipher. Every documented breakthrough in the past decade has involved substantial human cryptanalytic insight at the core, with computational tools playing a supporting role.

The Voynich Manuscript

The Voynich Manuscript, an early-fifteenth-century codex of approximately 240 vellum pages written in an unknown script with botanical, astronomical and human-figure illustrations, remains the most famous unsolved historical document. Radiocarbon dating in 2009 placed the parchment in the early fifteenth century (roughly 1404-1438), which makes a modern forgery very unlikely, although the test dates the vellum rather than the ink. The manuscript’s text contains approximately 35,000 words written in characters that do not match any known script.

Despite numerous announced “solutions” (by Stephen Bax in 2014, by Gerard Cheshire in 2019, and by various others), none has been accepted by the broader scholarly community. The Cheshire claim, that the Voynich was written in an extinct “proto-Romance” language, was particularly criticised by Romance linguists, who argued that the proposed language did not match the historical linguistic record.

The most rigorous current scholarship treats the manuscript as either an unidentified language, an enciphered known language using a method not yet identified, or possibly a sophisticated hoax (though the carbon dating substantially raises the cost of any hoax hypothesis). The 2024 collaborative effort coordinated by the Beinecke Library at Yale, where the manuscript currently resides, has continued to release high-resolution scans and to work with researchers including the palaeographer Lisa Fagin Davis.

Machine learning approaches to the Voynich, including a 2018 University of Alberta paper by Greg Kondrak that suggested Hebrew as the underlying language, have not been broadly accepted. The fundamental difficulty is that without prior knowledge of the cipher method or the underlying language, statistical approaches have very limited traction.

The Beale ciphers

The Beale ciphers, three encrypted documents published in an 1885 pamphlet purporting to describe the location of buried treasure in Bedford County, Virginia, have an unusual evidentiary status. One of the three (the second) was decrypted in the late nineteenth century using the United States Declaration of Independence as a key, and describes the supposed treasure. The first and third remain undecrypted.
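The mechanism behind the solved second document is a book cipher: each number points to a word in the key text and stands for that word’s first letter. A minimal sketch follows, assuming a short stand-in key (the opening words of the Declaration) rather than the full text, and ignoring the edition-specific word-count quirks that matter for the real document. The function names are illustrative.

```python
import re

# Stand-in key text: only the opening words, for illustration.
KEY_TEXT = ("When in the course of human events it becomes necessary "
            "for one people to dissolve the political bands")


def first_letters(key_text):
    """Upper-case first letter of every word in the key text, in order."""
    return [word[0].upper() for word in re.findall(r"[A-Za-z]+", key_text)]


def decode(numbers, key_text=KEY_TEXT):
    """Map each 1-based word number to that word's first letter ('?' if out of range)."""
    letters = first_letters(key_text)
    return "".join(letters[n - 1] if 1 <= n <= len(letters) else "?"
                   for n in numbers)


def encode(message, key_text=KEY_TEXT):
    """Encode with the first matching word; raises ValueError if no word starts with a letter."""
    letters = first_letters(key_text)
    return [letters.index(ch) + 1 for ch in message.upper()]
```

Because several words share an initial letter, the same plaintext letter can be written with different numbers (here both 2 and 8 decode to I), which makes a book cipher a form of homophonic substitution. Without the right key text, the numbers carry very little structure to attack.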

The case is unusual because the second cipher’s solution provides a definite test: if the underlying treasure existed, it should be findable. No treasure has ever been found at the locations suggested by the cleartext, and substantial historical investigation has cast doubt on the entire pamphlet’s narrative. Most modern academic cryptanalysts treat the Beale ciphers as a probable nineteenth-century hoax, though the first and third documents remain technically unsolved.

Machine learning approaches to the Beale ciphers have produced no progress beyond what was achievable with classical methods, which is consistent with the pattern: where there is no underlying message, no cryptanalytic technique can produce one.

Kryptos and modern designed challenges

Some historical-style cipher challenges have been deliberately designed by modern artists and cryptographers, in part to resist solution. The Kryptos sculpture by Jim Sanborn, installed at CIA headquarters in 1990, contains four enciphered passages. The first three were solved by the end of the 1990s: computer scientist James Gillogly announced his solution publicly in 1999, after which it emerged that CIA analyst David Stein and a team at the NSA had each solved them earlier. The fourth section, K4, of 97 characters, remains unsolved despite three decades of work and substantial public attention.

Sanborn has periodically released hints about K4 (in 2010, 2014 and 2020), and the partial cleartext segments revealed by these hints have somewhat constrained the search space without enabling a solution. The case is interesting precisely because Sanborn was a sculptor, not a professional cryptographer, and constructed the ciphers with advice from Edward Scheidt, a retired CIA cryptographer; yet K4 has resisted decades of expert and amateur attack.

What is realistic to expect

Three honest predictions for the next decade of cipher work:

- More mid-difficulty historical ciphers will be solved. Documents in the difficulty class of the Copiale and the Z-340 — homophonic substitution with possible transposition, in known underlying languages — are likely to fall to combined human-machine effort over the next ten to fifteen years.

- The Voynich Manuscript probably remains unsolved. Without independent linguistic information, statistical approaches face fundamental limits.

- True breakthroughs will be human-led, machine-assisted. No purely AI-generated cipher solution to a major historical document has occurred, and the structural reasons make such a development unlikely in the near term.

The wider methodological lesson

The history of historical-cipher work suggests that the most productive investigations combine deep knowledge of the cipher class, reasonable hypotheses about the underlying language and historical context, computational tools applied to constrained problems, and persistent effort over years. None of these elements alone is sufficient. The modern improvement is in the computational tools, but the human expertise and contextual knowledge remain irreplaceable.

This is a useful corrective to broader narratives about AI replacing expert work. In one of the few domains where AI capability can be tested against persistently hard, well-defined problems with verifiable outcomes, the result is supplementation rather than replacement.

The cryptanalytic community in 2026

The community of working cryptanalysts who engage with historical ciphers has expanded substantially since 2010, partly through online forums and partly through dedicated academic and amateur research networks. The American Cryptogram Association, founded in 1929, continues to publish the bimonthly journal The Cryptogram and hosts annual conventions where cryptanalysts present work on both classical and historical ciphers. The European cryptanalytic community is more dispersed but includes substantial activity at the University of Mannheim’s HistoCrypt programme and the Bletchley Park Trust’s research initiatives in the UK.

The blog Cipher Mysteries, maintained by Nick Pelling since 2008, serves as one of the most active English-language venues for sharing work on unsolved historical ciphers. The blog has been particularly important in coordinating amateur work on the Dorabella, Tamam Shud and Voynich cases. Several other specialised forums, including the unsolved-ciphers section of Reddit and various academic mailing lists, provide additional infrastructure for collaborative work.

The Dorabella Cipher and other minor mysteries

Beyond the famous cases above, several smaller historical ciphers have remained unsolved for periods that test the limits of available cryptanalytic methods. The Dorabella cipher, an 87-character message written by composer Edward Elgar to his friend Dora Penny in 1897, has resisted attempted decryption for more than a century despite its short length. The cipher uses sets of curved-line glyphs apparently derived from a substitution alphabet, but no published solution has gained scholarly consensus. Tony Gaffney’s 2007 partial decryption gained some attention but did not resolve the message definitively.

The Smithy Code, a 117-character cipher embedded by Justice Peter Smith in his 2006 judgment in Baigent & Leigh v. Random House Group, was solved within weeks of publication, making it a relatively recent designed cipher that fell quickly to amateur cryptanalysis. The case is a useful counter-example to the general pattern: a cipher with a relatively standard substitution structure, designed by a non-specialist, can be broken quickly even without machine learning assistance.

The Tamam Shud case, the unsolved 1948 death of an unidentified man on Somerton Beach in Adelaide, Australia, includes a five-line cipher discovered in a copy of Omar Khayyam’s Rubaiyat linked to the deceased. The cipher has resisted attempted decryption for more than seventy-five years. The 2022 identification of the deceased as Carl Webb, made through DNA analysis by University of Adelaide researcher Derek Abbott and genealogist Colleen Fitzpatrick, closed part of the case but did not resolve the cipher. Modern computational analyses of the five-line message suggest it may consist of the first letters of words in a longer text, possibly an intelligence-related book code, but no specific source text has been identified.

Computational methods, in detail

For readers interested in the technical details of how cipher solving has changed, several specific algorithmic developments deserve mention. The hill-climbing algorithm, applied to homophonic substitution ciphers since the 1990s, has been substantially refined in software including Van Eycke’s AZdecrypt. The algorithm starts with a random key, tries small modifications, and accepts modifications that improve the n-gram score of the candidate decryption. Modern implementations run thousands of restarts in parallel and use sophisticated scoring functions trained on language-specific corpora.
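The loop described above can be sketched in a few lines. This is a simplified illustration, not AZdecrypt’s implementation: it assumes a plain one-to-one substitution cipher over A-Z rather than a homophonic one, a bigram model trained on whatever corpus the caller supplies, and sequential rather than parallel restarts. All function names and default values are illustrative.

```python
import math
import random
import string
from collections import Counter

ALPHABET = string.ascii_uppercase


def train_bigrams(corpus):
    """Log-probabilities of letter pairs in the corpus, plus a floor for unseen pairs."""
    letters = [c for c in corpus.upper() if c in ALPHABET]
    counts = Counter(zip(letters, letters[1:]))
    total = sum(counts.values())
    table = {pair: math.log(n / total) for pair, n in counts.items()}
    return table, math.log(0.01 / total)


def score(text, model):
    """Sum of bigram log-probabilities; higher means more language-like."""
    table, floor = model
    return sum(table.get(pair, floor) for pair in zip(text, text[1:]))


def decrypt(ciphertext, key):
    """key[i] is the plaintext letter assigned to ciphertext letter ALPHABET[i]."""
    return ciphertext.translate(str.maketrans(ALPHABET, key))


def hill_climb(ciphertext, model, rng, max_stale=2000):
    """Start from a random key; keep a two-letter swap only if the score improves."""
    key = list(ALPHABET)
    rng.shuffle(key)
    best = score(decrypt(ciphertext, "".join(key)), model)
    stale = 0
    while stale < max_stale:
        i, j = rng.sample(range(26), 2)
        key[i], key[j] = key[j], key[i]
        candidate = score(decrypt(ciphertext, "".join(key)), model)
        if candidate > best:
            best, stale = candidate, 0
        else:
            key[i], key[j] = key[j], key[i]  # undo the swap
            stale += 1
    return "".join(key), best


def solve(ciphertext, model, restarts=20, max_stale=2000, seed=0):
    """Run several independent climbs and keep the best-scoring key."""
    rng = random.Random(seed)
    runs = [hill_climb(ciphertext, model, rng, max_stale) for _ in range(restarts)]
    return max(runs, key=lambda run: run[1])
```

Whether the best-scoring key is the true one depends heavily on ciphertext length and on the quality of the language model. With short messages and a small corpus, the search can settle on fluent-looking nonsense, which is one reason practical solvers lean on higher-order n-grams and many restarts.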

Simulated annealing, applied to similar problems, allows the search to occasionally accept worse solutions to escape local optima. The technique is particularly useful for ciphers with multiple substitution stages or transposition components. The Z-340 solution combined hill climbing with explicit search across diagonal reading paths, which is a relatively recent algorithmic insight applied to homophonic ciphers.
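The difference from plain hill climbing lies entirely in the acceptance rule. The sketch below uses the common Metropolis criterion with a geometric cooling schedule. These are standard textbook choices assumed here for illustration, not a description of any particular solver, and the parameter defaults are arbitrary.

```python
import math
import random


def accept(delta, temperature, rng):
    """Always accept improvements; accept a worse move with probability exp(delta / T)."""
    if delta >= 0:
        return True
    return rng.random() < math.exp(delta / temperature)


def anneal(state, score, neighbour, rng, t_start=10.0, t_end=0.01, steps=5000):
    """Maximise score(state), cooling geometrically from t_start to t_end (steps >= 2)."""
    current, current_score = state, score(state)
    best, best_score = current, current_score
    for step in range(steps):
        temperature = t_start * (t_end / t_start) ** (step / (steps - 1))
        candidate = neighbour(current, rng)
        candidate_score = score(candidate)
        if accept(candidate_score - current_score, temperature, rng):
            current, current_score = candidate, candidate_score
            if current_score > best_score:
                best, best_score = current, current_score
    return best, best_score
```

In a cipher setting, `state` would be a candidate key, `neighbour` would swap two symbol assignments or adjust a transposition parameter, and `score` would be an n-gram fitness function. Early on, the high temperature lets the search wander out of local optima; as it cools, the behaviour converges to ordinary hill climbing.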

For statistical analysis of the underlying language, modern cryptanalysts use n-gram models trained on extensive corpora — typically 4-grams to 6-grams of letter sequences in the candidate underlying language. The Stanford Cryptanalysis Toolkit and the open-source CipherTools package both provide these capabilities to amateur researchers, which has substantially democratised serious cryptanalytic work.

Misconceptions about AI and ciphers

Several common misconceptions about AI and historical ciphers persist in press coverage. The first is that large language models can decrypt historical ciphers directly. They cannot, in general. Large language models are trained on cleartext language data and lack the specialised search and substitution capabilities required for cryptanalysis. They can sometimes assist with plausibility scoring or candidate generation, but the core decryption work still requires specialised algorithms.

The second is that AI has solved the Voynich Manuscript. It has not. Several papers have proposed AI-based interpretations, but none has produced a translation that other scholars accept as coherent. The Voynich’s resistance to decryption may reflect genuine novelty in the cipher method, an underlying language not in any current corpus, or the possibility that the document is a sophisticated nonsense text designed to look meaningful. Each of these possibilities is consistent with the failure of current methods.

The third misconception is that the cipher work is now mostly automated. The 2020 Z-340 breakthrough required substantial human cryptanalytic insight, particularly the recognition that the cipher used a transposition step in addition to homophonic substitution. The computational tools accelerated the work but did not replace the cryptanalytic insight. Across documented cipher breakthroughs of the past two decades, the human contribution remains structurally essential.

Practical guidance for amateur cryptanalysts

For readers interested in attempting cipher work seriously, several practical recommendations follow from the cases above. First, work on ciphers with a known plaintext language and a reasonably constrained method. Working on the Voynich Manuscript without a clear underlying-language hypothesis is unlikely to produce results, while working on lesser-known nineteenth-century homophonic ciphers in known languages is much more tractable.

Second, learn the standard cryptanalytic toolkit before attempting modern computational methods. Frequency analysis, contact analysis, period detection for polyalphabetic ciphers, and Kasiski examination remain essential. The classical references — David Kahn’s The Codebreakers, Helen Fouché Gaines’s Cryptanalysis — remain the standard introductory texts and are worth reading carefully before applying machine-learning approaches.
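As an example of how small these classical tools are, here is a sketch of Kasiski examination for estimating the period of a polyalphabetic cipher. It collects the distances between repeated trigrams and counts which candidate periods divide them. The function names, the trigram length and the `max_period` cut-off are illustrative assumptions.

```python
from collections import Counter, defaultdict


def repeat_distances(ciphertext, length=3):
    """Distances between consecutive occurrences of every repeated substring of `length`."""
    positions = defaultdict(list)
    for i in range(len(ciphertext) - length + 1):
        positions[ciphertext[i:i + length]].append(i)
    distances = []
    for locations in positions.values():
        distances.extend(b - a for a, b in zip(locations, locations[1:]))
    return distances


def factor_votes(distances, max_period=20):
    """Count, for each candidate period, how many distances it divides evenly."""
    votes = Counter()
    for d in distances:
        for period in range(2, max_period + 1):
            if d % period == 0:
                votes[period] += 1
    return votes
```

Repeats caused by the same plaintext meeting the same part of the key are separated by a multiple of the key length, so the true period tends to collect the most votes. Small factors such as 2 also score well by chance, so the result is a shortlist to test with frequency analysis, not a final answer.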

Third, document attempts thoroughly and share results with the established cryptanalytic community. Several smaller cases have been broken by amateur work shared through forums including the National Cryptologic Foundation’s online discussion groups and the Cipher Mysteries blog. The community has developed useful conventions for sharing partial results and verifying claims.

Further reading

For a general overview, the Wikipedia entry on the Voynich Manuscript is reasonably current. The Beinecke Rare Book and Manuscript Library at Yale University holds the manuscript and maintains a searchable digital archive of it. The National Security Agency’s National Cryptologic Museum publishes historical material on classical and machine ciphers, including substantial archives on the Enigma, Purple and Lorenz machines. Our notes on cryptography and unsolved documents are filed at phénomènes étranges, with broader unexplained-history material at mystères inexpliqués, and a separate thread on historical intelligence covering related cryptanalytic and signals-intelligence cases.

This article is for informational purposes and reflects publicly available cryptanalytic research as of early 2026; the field develops continuously, and readers should consult primary academic sources for the most current state of any specific case.
